AI policy in the region

The United States has seen a high volume of activity related to AI regulation. Much of it occurred at the state level in 2025, as the likelihood of federal regulation largely evaporated. Between January and June 2025, over 1,000 bills, nearly all of them at the state level, entered the legislative process. A major consideration looming over state legislation was the U.S. Congress's proposal, as part of the 2025 budget reconciliation process, to impose a 10-year moratorium on certain state-level regulation of AI. The moratorium was removed from the legislation before both chambers of Congress passed the bill in early July. (Although it falls outside this report's timeline, similar language reappeared in America's AI Action Plan, released in July 2025. The plan is not a law and holds no legal authority, but it sets out the United States's vision for global AI leadership and innovation.)

At the national level, the current presidential administration has pushed an innovation-first approach to AI (with a few notable exceptions, such as the Take It Down Act passed by Congress). This differs from the previous administration, which emphasized oversight and harm-based approaches to AI regulation. In the wake of the 2024 election, this shift in the federal approach has prompted increased state efforts to regulate the technology: many state legislatures have ramped up legislative activity on AI, seeking to balance calls for innovation with the need to address harm. At the state level, some language repeats nearly verbatim across bills, particularly on deepfakes (especially in elections), algorithmic bias and AI transparency. Notably, many of these approaches target "regulated industries," such as banking, healthcare or insurance, and as such would not apply directly to news and journalism.

In addition to the 50 U.S. states, U.S. territories are also developing proposals to regulate AI, such as Guam's Bill No. 64-38 and Puerto Rico's SB 0068; these proposals cover early-stage measures such as creating task forces and commissions to study AI and formulate policy. Our analysis includes efforts at the national, state and territory levels to demonstrate the breadth of approaches in the United States.

The increased activity across U.S. states has at times met legal pushback. For example, a federal judge found that California's AB 2839, which was intended to protect against deceptive deepfake content during election campaigns, unconstitutionally infringed on freedom of speech and expression (e.g., by reaching satire and parody content). Legal challenges may continue across U.S. states, as many states are proposing legislation that is not fully aligned with recent Executive Orders.

In Canada, activity initially centered on the Artificial Intelligence and Data Act (AIDA), Canada's proposed comprehensive AI legislation, introduced in 2022 as part of Bill C-27. AIDA took a harm-based approach to AI and drew criticism for its opaque development and vague provisions. It died when Parliament was prorogued in January 2025, after then-Prime Minister Justin Trudeau announced his resignation. Without an overarching AI law, the country has relied on existing laws (e.g., national and provincial privacy laws) to regulate AI, and it launched the Canadian Artificial Intelligence Safety Institute in late 2024 to study AI development and risks. Canada also has a Voluntary Code of Conduct that emphasizes the safe and responsible development and management of advanced generative AI systems. At the provincial level, Ontario's 2024 Strengthening Cyber Security and Building Trust in the Public Sector Act sets guidelines for public sector use of AI, with an emphasis on safeguarding personal information.

By the numbers

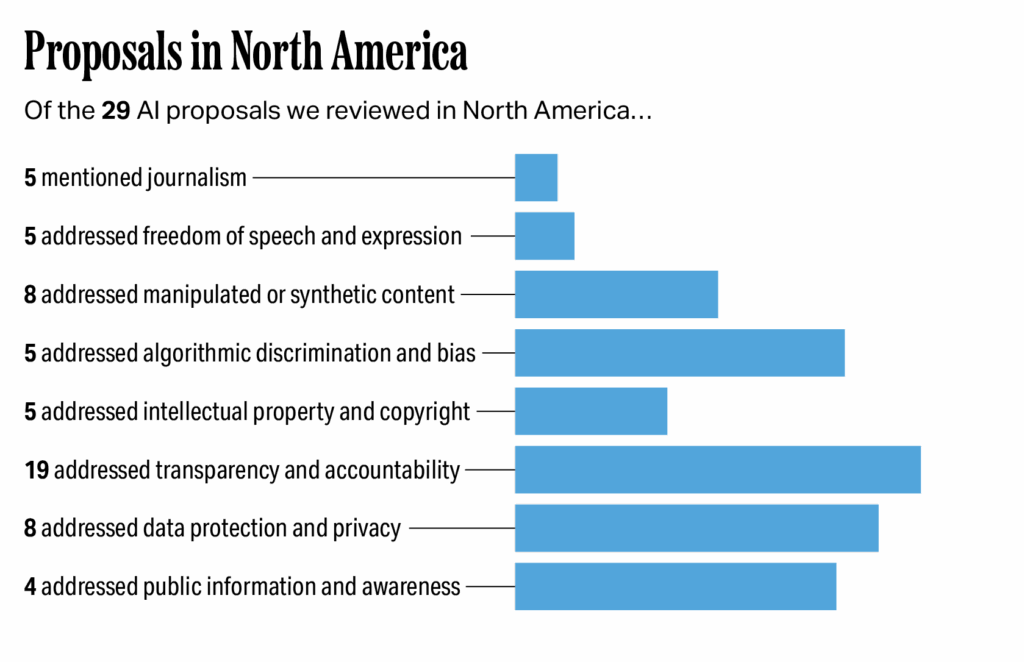

Of the 29 proposals we reviewed in North America (many of which addressed more than one topic), five specifically mentioned journalism; five addressed freedom of speech or expression; eight addressed manipulated or synthetic content; five addressed algorithmic discrimination and bias; five addressed intellectual property and copyright; 19 addressed transparency and accountability; eight addressed data protection and privacy; and four addressed public information and awareness.

Impacts on journalism and a vibrant digital information ecosystem

Public awareness and information provisions may improve journalists’ access to information, as well as their ability to share innovations — and what they mean — with the public. Much attention has been paid to transparency and accountability, with a particular focus on AI-generated content. News organizations have experience with disclosing uses of technology, and existing and proposed laws in the region that require disclosing AI-generated content are unlikely to pose a major burden. However, what kinds of disclosure are the most appropriate, effective and understandable remains an open question.

Legislation in North America has also addressed algorithmic discrimination and bias, which will shape how news organizations use AI systems in hiring. Bias audits allow for more in-depth examinations of AI tools and algorithms, which, where audit results are made available, could help journalists (1) better understand these systems and (2) explain them to the public. News organizations will also need to comply with new data protection provisions. These protections for personal data in AI systems, while important for privacy, may make it more difficult for journalists to investigate algorithms and alleged misuse of AI systems.

These proposals also carry important implications for copyright and intellectual property. The region has still not settled whether and how to handle compensation for the use of digital content. Questions about training data and who owns the outputs of generative AI have wide-ranging implications for journalism and the digital information ecosystem. News organizations will be directly affected by legal and legislative decisions on copyright and intellectual property, which will shape both compensation for digital usage and how news organizations develop and maintain their own AI systems.

Finally, we observed several approaches to freedom of speech and expression in the region. Several U.S. states' proposals acknowledged the First Amendment of the U.S. Constitution, which is pivotal to an open and free press. Others, relatedly, framed access to technology (and to AI technology in particular, as in Montana's Right to Compute Act) as a fundamental right, which could give journalists greater opportunity to develop journalism-specific tools for reporting and investigation.

Freedom of speech and expression

AI summary: North American AI proposals address freedom of speech and expression by suggesting protections for AI company employees, asserting existing First Amendment rights and viewing access to technology as a form of free expression. These approaches could strengthen journalistic reporting by supporting First Amendment rights and offering more opportunities for news organizations to develop AI models, though there are also potential challenges and unpredictable outcomes.

Approaches

Legislation in North America does consider freedom of speech, expression and information. The proposals we examined generally fit into three categories.

- Offering suggestions that AI companies provide protections to encourage their employees to voice concerns. New Jersey’s assembly resolution urges AI companies to provide “[c]urrent and former employees … the freedom to publicly report concerns until the creation of an adequate process for anonymously raising concerns.” This resolution is not legally binding and compliance is voluntary for AI companies.

- Acknowledging that policies do not impede existing protections for individual freedoms. Certain approaches in the U.S. emphasize that First Amendment rights take precedence over any provisions of newly proposed (or enacted) legislation (e.g., SB24-205 in Colorado and HB 149 in Texas). For instance, Colorado's legislation states that nothing in the law "imposes any obligation on a developer, a deployer, or other person that adversely affects the rights or freedoms of a person," and Texas's legislation similarly provides that its AI chapter should not be read to "impose a requirement on a person that adversely affects the rights or freedoms of any person …" In essence, these bills state that the legislation is not meant to impede First Amendment rights.

- Viewing access to technology as a form of free expression. One unique approach is Montana's SB 212, which provides that "[g]overnment actions that restrict the ability to privately own or make use of computational resources for lawful purposes, which infringes on citizens' fundamental rights to property and free expression, must be limited to those demonstrably necessary and narrowly tailored to fulfill a compelling government interest." Also known as the Right to Compute Act, Montana's approach treats access to technology and computational resources as a fundamental right of the state's residents.

Impacts on journalism

These policies affect journalism in several ways. For one, several U.S. states' proposals explicitly uphold First Amendment rights, which should help protect and bolster journalistic reporting, though these rights are not the primary focus of the legislation. New Jersey's assembly resolution could also make it easier for journalists to report on allegedly nefarious and dangerous practices within AI companies.

Montana’s “right to compute” approach suggests news organizations in the state may have more opportunities to develop AI models because using and building computational resources (including AI systems) are viewed as fundamental rights in this legal framework. This approach may allow for developments in AI that benefit journalism (e.g., developing novel content recommendation algorithms) by encouraging the idea that technology is a right for all. It is unlikely to be sufficient on its own: news organizations will also need extensive training and capacity building to achieve these goals. Moreover, the “right to compute” approach will likely make it more difficult to regulate the industry in other ways and may thus have wide-ranging repercussions that are difficult to predict.

Manipulated or synthetic content

AI summary: North American proposals on manipulated content aim to prevent harm by prohibiting nonconsensual intimate AI content, requiring consent for image/voice manipulation and mandating labels for deepfakes, sometimes with exceptions for satire or entertainment. In the United States and Canada, however, "false" information is constitutionally protected, subjecting policy proposals in this area to strict scrutiny. Indeed, most have been blocked by courts before taking effect or have quickly faced legal challenges.

These types of proposals could protect journalists from deepfake misuse and offer opportunities for using synthetic media responsibly, but they could also limit reporting if definitions of “news organization” are too narrow or if consent rules are too strict and enable unintended censorship.

Approaches

North American proposals have addressed, or are considering how to address, manipulated content in many ways. These generally fit into four categories.

- Prohibiting the publication of nonconsensual intimate content created with AI. Several examples, such as the U.S.'s 2025 Take It Down Act, focus on mitigating harms from manipulated content by prohibiting the publication of nonconsensual "intimate visual depictions" of adults (and any "intimate visual depictions" of minors) that are either authentic or created with the assistance of computer programs, such as deepfakes (although many states already have laws prohibiting the publication of such content). The act's more novel provision requires covered platforms to establish a notice-and-removal process and to remove such content within 48 hours of receiving a valid removal request.

- Requiring consent for manipulating an individual's image or voice. This approach protects an individual's image and likeness from being used without authorization and provides property rights over one's own image and likeness. For example, Tennessee enacted the Ensuring Likeness, Voice, and Image Security Act of 2024 (ELVIS Act), expanding the state's statutory right of publicity in two ways. First, it expands existing law to prohibit unauthorized commercial use of an individual's voice, in addition to the existing restrictions on use of an individual's name, photograph and likeness. Second, the ELVIS Act creates secondary liability for AI companies and platforms if they knew their tools or services facilitated the use of the person's voice/name/photo/likeness and that the use was unauthorized.

- Requiring labels or disclaimers for deepfakes. A number of proposals explicitly prohibit distributing deepfakes without disclosure (e.g., Hawaii’s SB 2687, New Hampshire’s HB 1432, South Dakota’s SB 164), especially regarding election-related content. Many of these types of proposals permit deepfakes if disclaimers/disclosures are readily presented with the content.

- Providing carve-outs for satire, parody or journalism. A few proposals provide carve-outs for synthetic media that contain satire or parody (e.g., Illinois’s SB 150, South Dakota’s SB 164), as well as exceptions for news organizations that present synthetic media to audiences as part of official news coverage and disclose that it is non-authentic content.
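To make the Take It Down Act's 48-hour removal window concrete, the following Python sketch computes the removal deadline for a notice and checks whether it has passed. It is a simplified illustration, not compliance guidance: the function names are hypothetical, and the sketch assumes the clock starts at the moment a valid request is received and that timestamps are timezone-aware.

```python
from datetime import datetime, timedelta, timezone

# The Take It Down Act gives covered platforms 48 hours after receiving a
# valid removal request to take the reported content down.
REMOVAL_WINDOW = timedelta(hours=48)


def removal_deadline(notice_received: datetime) -> datetime:
    """Return the latest time by which the reported content must be removed.

    Hypothetical helper: assumes the clock starts when the request arrives.
    """
    if notice_received.tzinfo is None:
        # Naive timestamps are ambiguous across time zones, so refuse them.
        raise ValueError("notice_received must be timezone-aware")
    return notice_received + REMOVAL_WINDOW


def is_overdue(notice_received: datetime, now: datetime) -> bool:
    """True if the removal deadline has already passed at time `now`."""
    return now > removal_deadline(notice_received)


notice = datetime(2025, 6, 2, 9, 30, tzinfo=timezone.utc)
print(removal_deadline(notice).isoformat())  # 2025-06-04T09:30:00+00:00
```

Recording notices in UTC, as here, avoids ambiguity when a 48-hour window spans a daylight-saving change in a local time zone.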

Impacts on journalism

Proposals that seek to address manipulated content in North America must strike a careful balance between countering disinformation and protecting free speech; “false” information is protected in Canada and the United States. Policy proposals in this area are subject to strict scrutiny, and if they do not maintain this careful balance, they could be misused for censorship, even in democracies.

Legislation like the Take It Down Act, for example, will likely protect journalists’ identities from being recreated in nonconsensual intimate deepfakes; however, experts warn that the removal provision in the act poses serious risks to free speech and could be misused for political or ideological gain.

Similarly, several state-level deepfake laws have already been challenged or blocked in federal court. For example, The Babylon Bee, a satirical website, has filed a lawsuit challenging Hawaii’s SB 2687.

As discussed in CNTI's issue primers, there may also be situations in which deepfakes can protect the identities of both sources and journalists. Although some proposals carve out certain types of synthetic media when they are clearly disclosed in official news reporting, further consideration should be given to how journalistic principles and synthetic media fit together.

Labeling and disclosure requirements could be a relatively straightforward way to help the public better understand how AI-generated content is used in journalism, but such requirements could also be considered compelled speech under the United States’ First Amendment and could violate outlets’ editorial independence.

While some legislation includes provisions protecting satire and parody, as well as synthetic media covered by news organizations or used for legitimate reporting, the clauses determining who is eligible for those protections (i.e., what counts as a news organization) may not cover all the ways journalism is practiced today. For example, Illinois's SB 150 would exempt AI-manipulated political communications content in a "bona fide newscast, news interview, news documentary, or on-the-spot coverage of a bona fide news event" if the AI content is clearly disclosed. However, it is unclear who decides which organizations qualify, especially since the exception language explicitly covers "a radio or television broadcasting station, including a cable or satellite television operator, programmer, or producer" but does not clearly include podcasts or internet sources (e.g., YouTube content creators). If content creators or independent journalists are not included, these bills could be used to limit their reporting.

Laws that require consent, such as Tennessee's ELVIS Act, may require news organizations to scrutinize the advertisements they run more closely to avoid liability for any voice or image manipulations. This is because the enacted ELVIS Act contains a narrower fair use provision than earlier drafts did, which increases liability for media companies.

As many proposals require disclosure of synthetic media content, news organizations can serve as valuable collaborators along with other stakeholders to design and implement appropriate and effective labels.

Algorithmic discrimination and bias

AI summary: North American proposals address algorithmic discrimination and bias by focusing on consumer and employment protections, safeguarding vulnerable groups and requiring regular bias audits for AI systems. These approaches may affect journalism by requiring news organizations using AI for hiring or content recommendations to conduct bias audits and ensure fairness, while at the same time potentially providing journalists with valuable data for reporting on AI.

Approaches

Several proposals we reviewed in North America address algorithmic discrimination and bias. These generally fit into three categories.

- Emphasizing consumer and employment protections. For example, Colorado's SB24-205 strives to protect people from algorithmic discrimination by regulating "high-risk" AI systems, and their developers and deployers, that make "consequential decisions" affecting access to resources such as education, employment, healthcare or insurance. Both developers and deployers of these high-risk AI systems in Colorado must use reasonable care to mitigate algorithmic discrimination, and the legislation also requires risk assessments and annual reviews.1

- Protecting vulnerable populations and groups from algorithmic discrimination and bias. These types of provisions are found in Guam’s Bill No. 64-38, Hawaii’s SB 59, Texas’s HB 149 and Canada’s AIDA (failed). Guam’s proposal seeks to create the “Guam Artificial Intelligence Regulatory Taskforce,” which would be responsible for developing a regulatory framework to prevent algorithmic bias.

- Requiring bias audits related to algorithm usage. New Jersey's A3855, for example, requires bias audits of "automated employment decision tools" to mitigate the potential negative impacts of algorithmic discrimination. A bias audit in New Jersey would use demographic variables (e.g., income, age, gender, race, ethnicity, religion) to measure the tool's scoring and selection rates, based on the training data of the employers or employment agencies that use it. Proposals of this kind generally require regular bias audits (often yearly).
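The selection-rate comparison at the heart of such audits can be sketched in Python. This is an illustration under stated assumptions, not the method A3855 prescribes: it computes each group's selection rate and an impact ratio relative to the most-selected group (the calculation used in New York City's Local Law 144 bias audits), then flags groups below 0.8 using the EEOC's "four-fifths" rule of thumb. The group labels and counts are hypothetical.

```python
from collections import Counter


def selection_rates(outcomes):
    """Share of applicants selected in each demographic group.

    outcomes: iterable of (group, was_selected) pairs, one per applicant.
    """
    applicants = Counter()
    selected = Counter()
    for group, was_selected in outcomes:
        applicants[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / applicants[group] for group in applicants}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no applicants were selected in any group")
    return {group: rate / top_rate for group, rate in rates.items()}


def flag_adverse_impact(ratios, threshold=0.8):
    """Groups whose impact ratio falls below the four-fifths benchmark."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical audit data: group A has 40 applicants (20 selected),
# group B has 50 applicants (15 selected).
outcomes = ([("A", True)] * 20 + [("A", False)] * 20
            + [("B", True)] * 15 + [("B", False)] * 35)
rates = selection_rates(outcomes)    # {'A': 0.5, 'B': 0.3}
ratios = impact_ratios(rates)        # {'A': 1.0, 'B': 0.6}
print(flag_adverse_impact(ratios))   # ['B']
```

The 0.8 threshold is used here only as a common screening benchmark; the sketch does not reflect any threshold set by the New Jersey bill, and a real audit would also need to account for small sample sizes and intersecting categories.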

Impacts on journalism

These proposals may affect journalism in a variety of ways. To start, organizations that use AI systems during the hiring process would likely be affected. News organizations in New Jersey that use such tools, for example, would likely need to obtain bias audits demonstrating that their use of AI complies with the rules and does not harm protected groups.

It is unlikely that these proposals would require audit results to be made public. In New Jersey, for example, it remains to be seen where and how independent auditors would release the results of their bias audits. That said, if the audits do become available to journalists, they could serve as a valuable resource for reporting on AI tools, companies and technological developments.

Regulation of algorithmic discrimination and bias may also affect how news organizations interact with customers digitally. For example, while New Jersey's bill covers only automated employment decisions, broader legal approaches might require news organizations to verify that article recommendation algorithms do not disadvantage readers or provide different information based on protected attributes (e.g., race, ethnicity, gender). Advertising decisions that involve AI models would also fall into this category.

Intellectual property and copyright

AI summary: Several proposals in North America are considering intellectual property and copyright, with some states clarifying ownership of AI-generated content, upholding existing laws and requiring disclosure of training data. These approaches will influence how news organizations develop their own AI models and how they handle content for training purposes, as ongoing legal battles will determine the future of intellectual property and copyright in the region.

Approaches

Intellectual property and copyright questions surrounding AI developers' training data have received a lot of attention in the U.S. judicial system. For example, in June 2025, a federal judge ruled in Bartz v. Anthropic that Anthropic's scanning of purchased books to train its generative AI models constituted fair use, though the court did not extend that finding to pirated copies the company had stored. Although a district court ruling does not bind other courts, it will likely inform The New York Times's pending case against OpenAI.

On the policy front, only a few individual U.S. states have considered how intellectual property and copyright law relate to AI; in the U.S., copyright is largely governed at the federal level. These state-level efforts generally fit into three categories.

- Specifying the ownership of AI-generated content. For example, HB 1876 in Arkansas has attempted to clarify who owns material that has been created with generative AI models: “the person who provides the input or directive [owns the generated content] … provided that the content does not infringe on existing copyrights or intellectual property rights.”

- Providing language that upholds existing laws on the topic. For example, Montana’s Right to Compute Act, while innovation-focused, has a provision in Section 5 to uphold “federal and state intellectual property laws” but does not clarify who owns content created by generative AI models.

- Requiring the disclosure of training data and the ownership of that data. These disclosure requirements aim to protect intellectual property. Among other things, California's AB 2013 requires AI developers to publicly disclose:

  - The sources or owners of the datasets.

  - Whether the datasets include any data protected by copyright, trademark, or patent, or whether the datasets are entirely in the public domain. [Note: California's legislation does not include any penalty for using these types of data.]

  - Whether the datasets were purchased or licensed by the developer.

Impacts on journalism

In the U.S., federal copyright law largely preempts state copyright law, so any state-level activity would ultimately be subject to what happens at the federal level. On that front, more consequential activity is likely as ongoing lawsuits and court cases conclude. The scarcity of state-level activity may also simply reflect deference to federal law. Separately, a series of reports released by the U.S. Copyright Office in 2024 and 2025 provided recommendations for how U.S. copyright law could be updated to account for generative AI; because this report focuses on policies, strategies and legislation, those reports were not included in our analysis.

It remains to be seen whether training models on news organizations' content will be found to violate intellectual property, copyright and fair use laws, though rulings to date suggest it does not. These determinations will likely shape how news organizations can legally develop their own AI models for content recommendation, personalization and content creation. Depending on the outcomes of existing lawsuits and court cases, that will largely come down to which training data are available beyond the news organization's own content. The ongoing legal battles between news organizations and technology companies over AI developers' use of news content for training will shed light on the central questions of intellectual property and copyright, especially who owns data and what data can be used to train AI models.

Amid these uncertainties, several news organizations have also signed deals with AI developers to grant access to their news archives, while other news organizations are hesitant to do so. Determining who owns AI-generated content across the region, especially as so few legislative examples exist, is critical. And overall, the region has still not fully grappled with how to value digital content, which CNTI wrote about in detail in our analysis of media remuneration policy.

Transparency and accountability

AI summary: North American AI proposals on transparency and accountability focus on requiring disclosure of AI-generated content, especially in elections, as well as mandating transparency around training data and AI models, algorithmic impact assessments and the development of AI detection tools. These approaches will require news organizations to label AI-generated content, potentially develop AI detection tools and will enable journalists to report on AI systems in more detail due to increased transparency.

Approaches

Transparency and accountability have received a lot of attention in North America in recent years, and the range of transparency considerations is extensive. While nearly all of the proposals we examined are legally binding legislation (or would be if passed), New Jersey passed a non-binding assembly resolution urging whistleblower protections for employees at AI companies.

The proposals we reviewed generally fit into five categories.

- Requiring disclosure of AI-generated content. Many of these approaches revolve around elections and election communications (e.g., advertising). Several also define acceptable types of disclosure (e.g., SB 2687 in Hawaii, SB 150 in Illinois, HB 1432 in New Hampshire, SB 164 in South Dakota), which often require explicit labels or disclaimers denoting AI-generated content. A slightly broader but related approach appears in Ontario's Bill 194, which stresses that public sector entities need to disclose their use of AI systems (with specific provisions to be made by the Lieutenant Governor in Council).

While few bills or laws explicitly discuss news organizations and journalism, A5164 in New Jersey and S6748 in New York are two examples of pending legislation that would specifically require news organizations to label and disclose their use of generative AI in their content. New Jersey's bill defines news media as "newspapers, magazines, press associations, news agencies, wire services, radio, television or other similar printed, photographic, mechanical or electronic means of disseminating news to the general public …" New York's bill, on the other hand, does not define news media outright but states that every "newspaper, magazine or other publication printed or electronically published in this state [NY]" that includes generative AI content must "conspicuously imprint" (i.e., disclose) information about that AI use.

- Requiring training data and model transparency. For example, California's AB 2013 and Washington's HB 1168 outline the documentation AI developers must share about the training data for AI systems, including (1) descriptions of the data, (2) whether personal data were included and (3) the time period during which the data were collected. Relatedly, SB 59 in Hawaii includes a provision requiring anyone who uses AI for an algorithmic eligibility determination (in other words, using an algorithm to determine eligibility for an important opportunity such as employment or insurance) to issue a notice about how personal data are used in those decisions.

- Requiring algorithmic impact assessments, especially for “high-risk systems.” SB24-205 in Colorado states that these assessments should include “a description of any transparency measures taken concerning the high-risk artificial intelligence system, including any measures taken to disclose to a consumer that the high-risk artificial intelligence system is in use …” as a way to satisfy the conditions of the law.

- Requiring disclosure of non-human AI interactions to consumers. HB 516 in Alabama, HP 1154 in Maine and SB 149 in Utah aim to (1) prevent deception in online trade and commerce and (2) strengthen consumer protections. For example, users would need to be notified they are engaging with an AI agent and not a human.

- Requiring development of AI detection tools. California's AI Transparency Act (SB 942) requires AI providers to release an AI detection tool for users. The law will "require a covered provider, as defined, to make available an artificial intelligence (AI) detection tool at no cost to the user that meets certain criteria, including that the AI detection tool is publicly accessible." The tool will need to let users check whether content they submit to it was created or altered by the provider's AI system, with the aim of increasing transparency and accountability.

Impacts on journalism

These types of proposals affect journalism in a number of ways. Disclosure requirements for AI-generated content will oblige news organizations to add labels before publishing. Which labels work best for specific types of content is still an open question and is not explicitly addressed in existing legislation. Under California's SB 942, news organizations that offer generative AI systems for public use (e.g., chatbots trained on their own content) could be required to make a free AI detection tool available if those systems exceed 1 million monthly visitors or users.

Mandatory labeling also carries free speech implications, because labeling requirements could be considered compelled speech. In other words, these requirements could be interpreted as the government telling individuals (i.e., news organizations and journalists) what they must say, which could violate the U.S.'s First Amendment.

Proposals with transparency and accountability provisions will also allow journalists to report on AI systems in more detail. For example, greater transparency about training data allows for closer evaluation of AI models and their outputs.

The two proposals we examined that directly focus on news organizations (A5164 in New Jersey and S6748 in New York) carry implications for common journalistic tasks. One of these is journalists’ use of AI transcription and translation, which can be considered generative AI, depending on the specific tool used. In New Jersey’s bill, for example, these uses would need to be disclosed to audiences. Journalists and newsrooms will likely need to further consider how much (and what) information needs to be provided to audiences if, for example, generative AI was used early in the reporting process to transcribe and summarize meetings.

Lastly, the impacts will depend on whether the proposals place obligations mainly on developers or on deployers. For example, California's AB 2013 and Washington's HB 1168 place transparency requirements on AI developers, which should enable greater journalistic coverage of AI systems. By contrast, legislation that focuses on deployers would apply to news organizations and journalists that use AI systems, holding them responsible for those systems' outputs. Some approaches address both, such as Colorado's SB24-205, which sets transparency requirements for developers and deployers of high-risk AI systems.

Data protection and privacy

AI summary: North American AI proposals on data protection and privacy focus on requiring personal data to be de-identified and ensuring secure handling of anonymized data. These approaches will require news organizations to comply with new regulations if they use personal data in their AI systems. They might also make it harder for journalists to evaluate AI systems if training data are protected.

Approaches

Several proposals consider the implications of data protection and privacy when building and deploying AI. The proposals we reviewed generally fit into the following categories.

- Requiring personal data to be deidentified. Several proposals include provisions for protecting "personal provenance data," such as SB 942 in California, or personal information, such as SB 59 in Hawaii. These cover any metrics or information that can be traced back to an individual user. Relatedly, Utah's 2024 SB 149 (whose expiration date was extended in 2025 by SB 332) includes provisions on "deidentified data" intended to prevent personal data from being used to identify individuals.

- Requiring secure handling and processing of anonymized data. Canada's AIDA outlined requirements for those processing or otherwise working with anonymized data, covering (1) how the data are anonymized, (2) how the data are used and (3) how the data are managed.

Impacts on journalism

These approaches affect journalism in several ways. News organizations may need to comply with regulations if they develop AI systems using personal data, although most current laws focus primarily on regulated industries. There could also be limits on what types of data can be shared between news organizations, and how, should they collaborate to develop and deploy AI systems for their specific needs.

Data protection requirements are likely useful for protecting individual privacy, but journalists may encounter difficulty when examining and investigating AI systems if the training and testing data are protected because they contain personal data. Learning which types of deidentified data are acceptable for assessing AI systems could give journalists an opportunity to evaluate training data without handling personal information.

Public information and awareness

AI summary: North American AI legislation regarding public awareness mainly involves creating public campaigns, training government employees and funding educational programs, but these efforts rarely include journalists. Journalists could play a key role in raising public awareness about AI and would benefit from learning about AI systems to better report on technological developments.

Approaches

There are few examples of legislation in North America that explicitly focus on public information and public awareness, but S. 1699 in the U.S. Congress is noteworthy. The proposals we reviewed generally fit the following categories.

- Developing public awareness campaigns. The U.S. Senate has introduced S. 1699, which would create a “public awareness, education, and consumer literacy campaign” to provide information about AI technologies. The Secretary of Commerce would be responsible for overseeing the campaign, which would also include a requirement to measure public literacy on AI technologies.

- Creating training programs for certain government employees. Texas's Responsible AI Governance Act places an emphasis on "training programs for state agencies and local governments on the use of artificial intelligence systems." These training programs do not extend beyond government, and so do not reach media organizations.

- Providing funding for AI courses and training. This includes educator training resources for educational institutions. These efforts are found in several U.S. states and range from creating commissions to study the impacts of AI in the classroom, such as Louisiana's HCR 66, to funding a state pilot program that will create an AI tool for classroom instruction and train the educators who will use that tool, as in Sections 143 and 144 of Connecticut's HB 5524. However, the scope of these educational programs does not extend to journalists or news organizations.

Impacts on journalism

Journalists may have a role to play in raising public awareness, particularly as potential leaders in a national AI awareness and literacy campaign like the one outlined in the U.S. bill S. 1699. To fill that role, journalists will need access to information about AI systems and models so they can build audience knowledge and understanding of these technological developments.

While several states’ proposals stress the importance of public awareness of AI in educational settings, the current examples of education provisions that aim to increase awareness do not include journalists specifically. Yet, there may be opportunities for news organizations to partner with schools to promote education initiatives for school-aged individuals and the general public more broadly.

Outside of S. 1699 in the U.S. Congress, which proposes a national AI awareness campaign and highlights the AI topics the public can learn about (e.g., machine translation, content provenance), the legislation we examined did not clearly describe what information is important for the public to know. Still, public awareness campaigns could help journalists learn about AI systems. This can assist them in their professional role of delivering information to the public as well as in reporting on developments in AI and automation technologies.

Conclusion

AI legislation has received a tremendous amount of attention in the United States over the last two years, driven in large part by state activity. Less legislation is being considered in Canada; however, it is difficult to compare the two countries given disparities in population and the number of U.S. states versus Canadian provinces and territories. Discussions in both countries are beginning to include important concepts related to journalism, namely transparency, accountability, data protection and free expression; however, they rarely name journalism explicitly, with a few noted exceptions in New Jersey and New York. Whether or not journalism is named directly, it is important that policymakers consider the ways legislation could harm independent news media and an open internet.

Within the journalism community itself, there are opportunities to set standards on transparency around the use of AI-generated content. There are also several examples of provisions that protect journalistic coverage of deepfakes. Yet the legislation outlined above also has shortcomings. All in all, the existing patchwork of approaches risks inconsistency in data protection, disclosure requirements and protections for freedom of speech/expression.

Federal and state legislation in the United States is an area to watch in the near future. It is also worth keeping an eye on future developments in Canada, especially given that the country recently formed a new government that might take a different approach to AI legislation. Early signs suggest that Canada may soon present an updated AI regulatory framework that takes copyright into consideration.
